Guest Post: Who runs the Internet?

it’s definitely not girls, only 25% of people in tech are women

This week’s Dispatch is a special issue from Jeremy Kaish. He has a master’s degree in computer engineering, which he says means he knows how computers are built and how those pieces work together. This means I ask him to help set up my new devices and sometimes fix my printer. Jeremy is a huge technology skeptic; he is a publicly avowed crypto, NFT, and web3 hater (you might remember him from previous Dispatches) and generally suspicious of all the “disruptors” out there. He cares that people understand how technology works in their lives and has the rare ability to talk about it without making your ears fall off.1

If you’re interested in writing about the ways you see information and organization operating in your life or area of expertise, feel free to send a draft my way. I would be happy to share it with the Dispatch readership.

The AI takeover that has occupied so much of our collective cultural brain space2 is here: machine learning algorithms and artificial intelligence systems run many aspects of our day-to-day lives, recommending movies, helping us navigate, and sometimes even deciding who gets hired for a job or who receives bail, and yet we do not and cannot know how they work. There are many ways these computer systems make our society less equal (I highly recommend Cathy O’Neil’s Weapons of Math Destruction), but this essay will focus on social media.

I’m going to start out with a little technical background that I will try to keep as painless as possible. I promise it will come back around.

Caution: Unsupervised Computers at Play

The term “algorithm” gets thrown around a lot as a catch-all for “anything a computer does on its own,” which isn’t that far from the truth. In its most basic form, an algorithm is simply a set of steps to accomplish a task. A cooking recipe is technically an algorithm, as is a set of directions to get from one place to another. While these would be very specific algorithms, what most people are actually referring to when they say “algorithm” are artificial intelligence algorithms that have become almost omnipresent. These kinds of algorithms are powerful because rather than strictly following a prescribed set of steps, such as in the cooking recipe example, they leverage probability and advanced math models to adapt to new information and inputs.

Algorithms have exploded in the past decade because companies now have access to an overwhelming, incomprehensibly large glut of data. It is often said (correctly) that social media is free because we are the product—these companies collect data on our likes, dislikes, connections, location, behaviors, and whatever information is potentially profitable and sell it to marketing companies so that they can micro-target their ads to the people most likely to respond.3 Data has become the new oil.

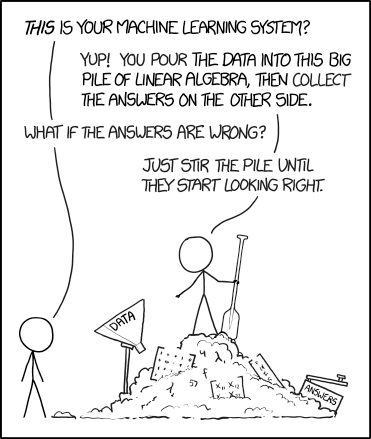

“But Jeremy, how does anyone comb through this heap of data to actually make use of it?” I’m glad you asked. Enter machine learning!

Broadly speaking, the goal of any machine learning algorithm is to make predictions. When, for example, Facebook automatically tags you in a photo, it is making a prediction that that is your face. And the model has a level of confidence for its prediction. In a system like Facebook’s, the engineers have decided on a level of confidence they want the model to maintain before automatically tagging someone, which is why not all people in a photo will be tagged.
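For the curious, here is a minimal Python sketch of that confidence cutoff. Everything in it is invented for illustration (the names, the scores, and the 0.90 threshold); it is not how Facebook's system is actually written, only the general shape of the idea.

```python
# Hypothetical cutoff: tag a face only if the model is at least this sure.
CONFIDENCE_THRESHOLD = 0.90

def faces_to_tag(predictions, threshold=CONFIDENCE_THRESHOLD):
    """Return the names whose predicted confidence meets the threshold."""
    return [name for name, confidence in predictions if confidence >= threshold]

# Made-up model output: (predicted person, confidence between 0 and 1).
predictions = [("Ana", 0.97), ("Ben", 0.91), ("person in background", 0.55)]

print(faces_to_tag(predictions))  # the low-confidence face is left untagged
```

Raising the threshold means fewer wrong tags but more untagged faces; lowering it does the opposite. Where to set it is a human decision, not a mathematical one.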

In the nascent days of machine learning research, almost all algorithms of practical use fell into the category of supervised algorithms. For these algorithms, a human must label all the training data, which is the data fed to the model ahead of time so the algorithm can learn how to achieve its goal. The algorithm can then make predictions for incoming unlabeled data. A linear regression is one simple method of supervised learning that you may have encountered in a high school or college stats course. These kinds of algorithms can be quite robust because people control the data that goes into training them, but they are also often narrowly targeted due to the time-consuming and expensive nature of properly labeling data, making it impractical to produce large enough data sets of labeled data for more general algorithms.
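As a rough illustration of supervised learning, here is a simple linear regression written in plain Python. The data set (hours studied and exam scores) is made up, and `fit_line` is a hypothetical helper name; the point is only that a human supplied the "right answer" for every training example before the model learned anything.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one input: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Labeled training data: every input comes with a human-provided answer.
hours_studied = [1, 2, 3, 4, 5]
exam_scores = [52, 54, 56, 58, 60]

slope, intercept = fit_line(hours_studied, exam_scores)

def predict(hours):
    """Predict a score for new, unlabeled input."""
    return slope * hours + intercept

print(predict(6))
```

The expensive part is not the math, which is a few lines, but producing the labels. Five hand-labeled examples are easy; five billion are not.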

The modern innovation that has enabled our current moment of algorithmic dominance, where vast troves of data can not only be collected but also turned into actionable information, is the advent of unsupervised machine learning. Unlike supervised learning, unsupervised learning requires no labeled training data (its close cousin, semi-supervised learning, requires only a small labeled portion); instead, the algorithm, through a lot of complicated math I won’t get into, finds its own correlations in the data and uses them to make predictions.
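To make "finds its own correlations" a bit more concrete, here is a sketch of k-means clustering, one of the simplest unsupervised methods, in plain Python. The data (minutes of video watched per day) and the starting guesses are invented, and real systems use far more elaborate techniques. Notice that nobody tells the algorithm what the groups are or what they mean.

```python
def k_means_1d(points, centroids, iterations=10):
    """Group unlabeled numbers around the given number of cluster centers."""
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        # Step 1: assign each point to its nearest center.
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Step 2: move each center to the average of its assigned points.
        centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids, clusters

# Unlabeled data: no human has said who is a "light" or "heavy" viewer.
minutes_watched = [2, 3, 4, 40, 42, 44]

centers, groups = k_means_1d(minutes_watched, centroids=[2, 44])
print(centers, groups)
```

The algorithm will happily split any data into two groups, whether or not two meaningful groups exist. It has no way of knowing if the categories it found make sense.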

This is where the problems begin. Because data is classified without human intervention, the system can come up with erroneous correlations and categorizations. Shira has already discussed at length the deficiencies inherent to categorization, so it doesn’t take too much imagination to see how a poorly built categorizer based on already-rocky categories can quickly lead to disaster. A particularly egregious example was when Google’s automatic image tagging system was found to tag Black people as gorillas. Horrible errors like this can occur when the creators of a machine learning system don’t pick their reward functions or training data carefully.

A reward function is a mathematical expression of what the designer wants the system to optimize for. A system used to determine loan eligibility probably wants to maximize the likelihood that the recipient won’t default, while a system that recommends movies may want to maximize the similarity of its suggestions to movies you’ve already watched and liked. Reward functions must be chosen carefully for machine learning systems to achieve the goals they’re designed for.
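Here is a toy version of the movie example in Python. The catalog, the genres, and the choice of similarity measure (the overlap between two sets of genres, known as Jaccard similarity) are all assumptions made for illustration; a real recommender would use something far more complicated.

```python
def reward(candidate_genres, liked_genres):
    """Score a candidate by genre overlap with what the user already likes.

    Jaccard similarity: shared genres divided by all genres involved.
    """
    candidate, liked = set(candidate_genres), set(liked_genres)
    return len(candidate & liked) / len(candidate | liked)

# Hypothetical user taste and catalog.
liked = {"comedy", "musical", "family"}
catalog = {
    "Movie A": {"comedy", "family"},
    "Movie B": {"horror", "thriller"},
    "Movie C": {"comedy", "musical", "romance"},
}

# The system recommends whatever maximizes the reward, and nothing else.
best = max(catalog, key=lambda title: reward(catalog[title], liked))
print(best)
```

Whatever the reward function leaves out, the system is blind to. This one knows nothing about whether a movie is any good, only whether it resembles what you have already watched.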

While a good reward function is very important, perhaps more important is the training data on which the system will base its decisions. There is a common misconception that decisions made by a computer are inherently unbiased because a computer is making them (“AOC SNAPS: World Could End In 12 Years, Algorithms Are Racist, Hyper-Success Is Bad” read the headline of one Daily Wire article, which I won’t link because that will only further promote it).4 It’s a kind of tautology that immediately falls apart upon the merest inspection. Computers are not objective agents; they learn their tasks from data we provide them, which is why machine learning systems have been shown to discriminate against people with disabilities in hiring, people of color in mortgage applications, and Black students in essay grading. Without thoughtful design, machine learning systems will learn our biases and reflect them back multiplied by a thousand. Computers, after all, can only do exactly what they’re told, and they learned from the best.

And this, at last, brings us back to social media.

“There is nothing so useless as doing efficiently that which should not be done at all.”5

The goal of any social media algorithm is to keep you on the platform. By suggesting videos you may like or friends to connect with or posts you may not have seen, the algorithm aims to keep you on the platform to continue to see ads, which actually make these companies money. Conspicuously missing, however, is any consideration of whether or not something is worth showing at all.

In one of my favorite scenes from the 1971 film Willy Wonka & The Chocolate Factory, a man has designed a machine that will tell him the location of the remaining Golden Tickets. The computer, however, refuses to divulge the tickets’ locations because “that would be cheating.” If such a machine existed today, I would not be writing this Dispatch.

To be usable by a machine learning system, which, again, is effectively an enormous pile of equations, data about us has to be distilled into numbers and categories. Likes and dislikes, places you’ve been and websites you’ve visited: Those are easy to quantify and readily supplied by the user. Morality and truthfulness—concepts that exist in uncountable shades of gray—are difficult to quantify, so social media companies don’t even try. As a result, social media algorithms have no understanding of the ethical difference between a cat video and a piece of 2020 election denialism, only how similar those two posts are to other pieces of content you’ve interacted with.
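A small Python sketch shows what that blindness looks like. The posts, the three "topic" numbers, and the `is_true` field are all invented for illustration, and cosine similarity is just one common way to compare lists of numbers. I am not claiming any platform ranks content exactly this way.

```python
import math

def cosine_similarity(a, b):
    """Compare two lists of numbers; closer to 1.0 means more alike."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical features: how much each item is about (cats, politics, cooking).
user_history = (0.1, 0.9, 0.0)
posts = {
    "cat video": {"features": (1.0, 0.0, 0.0), "is_true": True},
    "election denial post": {"features": (0.0, 1.0, 0.0), "is_true": False},
    "recipe": {"features": (0.0, 0.0, 1.0), "is_true": True},
}

# Rank by similarity to past behavior. Note that "is_true" is never read.
ranked = sorted(
    posts,
    key=lambda title: cosine_similarity(posts[title]["features"], user_history),
    reverse=True,
)
print(ranked)
```

The false post ranks first for this user simply because it looks most like what they have engaged with before. Truthfulness is sitting right there in the data, and the ranking never consults it.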

Unfortunately, this behavior is a feature, not a bug. Social media serves not only as a platform for bigoted ideologies, but as one of their greatest promoters. Through the slightest brush with a conspiracy theory, or even just self-identifying as Christian and Conservative, an unsuspecting user can be led on a downward spiral into radicalization, all because the system’s prime directive is to keep you on the platform. To paraphrase the lyrics from Bo Burnham’s Welcome to the Internet that haunt me the most: “it did all the things we designed it to do…it was always the plan to put the world in your hands.” This is then followed by a stomach-churning cackle.

Upsettingly akin to how fossil fuel companies knew about climate change as early as the 1970s, this problem is well-studied by its perpetrator but has been addressed with little more than lip service. Facebook has found through its own internal research that its site promotes polarization and has chosen to do nothing about it. The Wall Street Journal published an extensive series on Facebook’s widespread rot last year based on internal documents. Of particular note to us, the WSJ writes that Mark Zuckerberg has resisted proposed changes to Facebook’s algorithms because of fears that they would lead people to interact with the platform less. A computer can only do what it’s told, and the directive to keep people on Facebook at all costs starts at the top.

The next question an astute reader may ask is how are social media companies permitted to allow content that is blatantly false. Well, it turns out there’s a law for that.

“I’m sorry Dave, I’m afraid I [can] let you do that”

The law that allows social media to exist as it does today is Section 230 of the Communications Decency Act, passed in 1996. This statute states that “no provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.” In other words, platforms that host content created by a third party cannot be held liable for that content.

Broadly speaking, this law is a good thing. Free speech advocates argue that without this law, websites that host user-generated content, such as Facebook, Twitter, and YouTube, would either be bombarded with a never-ending stream of lawsuits stemming from the posts of their users or would have to adopt such stringent content moderation policies that anything but the most benign comments and posts would be taken down. The problem, however, is that the pendulum has swung too far the other way.

Due to these protections, only the most egregious violations of common decency and community standards, usually of a sexual or graphic nature, result in deletions. (This high threshold for violatory content creates a grueling job for the human content moderators who have to verify that flagged content is actually impermissible, often at the expense of their mental health.) There are many kinds of content that do not meet this standard, though, such as lies about the 2020 election, Covid-19 vaccines, the birthplace of Barack Obama, and more. Thresholds for content deletion may also be unevenly enforced, as was demonstrated on Twitter during the Trump presidency.

Tech moguls such as Mark Zuckerberg or Elon Musk, our modern-day oil barons, will say that their websites (Facebook and Twitter-to-be, at the time, and maybe again now) are “digital public squares,” where people from all over can gather and share their opinions, and as such the platform should moderate as little as possible to help promote the free exchange of ideas. To an extent, this is true; the internet and social media have connected us in ways we couldn’t even imagine 20 years ago. What these mediocre white men miss, however, is that free speech is not the same as free reach.

The freedom to say whatever you want does not also mean millions of people, or anyone at all, have to hear it. With the help of any given social media site’s algorithm (or some money to pay for promotion), a user’s post can extend vastly beyond their circle of friends or followers. It’s not very difficult: my brothers and I created several TikToks in our basement that amassed hundreds of thousands of views each during spring and summer of 2020. Now consider that there are entire enterprises out there that create clickbait designed to go viral. By piggybacking on the technology that gives me an endless stream of cartoon fan art and dunks on Eric Adams, these bad actors are able to spread their malice further and more easily than ever before.

Mr. Zuckerberg, tear down this algorithm!

The only silver lining to the falsehood-ridden conservative media outlets such as Fox News and Breitbart is that there is a real, living, breathing person behind everything that is aired or published. For better or (usually) for worse, an actual person decided, “yes, we are going to talk about [insert moral panic of the week] today.” I find solace in this because maybe a person making these decisions will have an epiphany and realize the damage they are doing and reverse course. These outlets also provide a person we can point to and say “stop being racist/sexist/xenophobic/transphobic/etc.!!” It’s not a very large bright spot, but it’s there.

The kind of misinformation found on social media, however, has no such recourse. An algorithm has no understanding of morality—it is such a sticky concept, so difficult to quantify—only of views, likes, shares, and most importantly, if a particular piece of content keeps you on the app. Fake accounts and bots can come and go in the blink of an eye. So who will we blame when someone dies from Covid after refusing to get vaccinated based on information served to them by social media algorithms? Social media companies hide behind their algorithms as a way of diffusing and deflecting responsibility.

Created by opportunists and amplified by algorithms, the kind of falsehoods that run rampant on social media are the result of, at best, negligence, and at worst, willful ignorance. Social media companies have shown time and again that they cannot be trusted to put the wider public interest above their own bottom lines. It took an insurrection in Washington, D.C., where the Capitol building was breached by hostile actors for the first time since the War of 1812, for Twitter and Facebook to reach their breaking point.

Ultimately, artificial intelligence is a misnomer. There is nothing intelligent about a computer. It cannot come up with something completely original; it can only take its training data and shuffle and combine it in new and sometimes interesting ways (this has come up a lot in the currently-hot debate about AI art). To be clear, the ability to sort through mounds of data and produce actionable insights is very useful; for example, advancements in image processing have enabled AI systems to detect cancer in MRI scans that the human eye may have otherwise missed. What makes these kinds of systems useful, though, is that they are a supplement to human expertise, not a replacement. An AI image scanner can tell a doctor to take another look, but it doesn’t make the final diagnosis. The systems currently deployed in social media apps have no such guides. They are the final say, which is why they so easily go awry.

Machine learning systems aren’t going anywhere; they are too lucrative and powerful to be cast aside. Their reach will continue to expand in the name of “efficiency” or “fairness” or “unlocking the potential of your clients” (these are all buzzwords I’ve seen for AI startups on the subway, and if you’ve reached this point in the Dispatch, I hope you too will now roll your eyes at these highfalutin promises). Computers are neither a silver bullet nor the bringer of the apocalypse. They are a tool, and, like all tools, their usefulness depends on who wields them. Our politicians are finally catching up to the state of AI and are introducing legislation to help compel greater transparency and equity, but in the meantime, we must all have a discerning eye. What would a computer do with a lifetime supply of data? If we don’t like the answer, then why are we feeding it?

housekeeping and birdseeking

house

What Jeremy read last week: Pride and Prejudice by Jane Austen (Austen Autumn continues!)

What Jeremy is currently reading: Detransition, Baby by Torrey Peters (10/10 recommend)

bird

Jeremy was recently in Arizona and was asked by a tour guide what a group of ravens is called, and he replied, “A conspiracy!” and the tour guide responded, “You are the first person who has ever known the answer.” Breaking glass ceilings, people!!

More later.

Source for the stat in the subhead: Girlies (affectionate) are not in tech.

When discussing AI apocalypses, I always return to this quote from author Monica Byrne, which could be an additional Dispatch.

Some people like seeing ads that are tailored to them. I do not. uBlock Origin is my ad blocker of choice.

One of the first ways Google sorted search results was by using the PageRank algorithm, which, among other things, takes into account how many other pages link to a given page to determine the given page’s priority in search results. While this would only be one link and Google’s sorting algorithms are much more advanced now, I won’t be responsible in any way for promoting that drivel.

Peter Drucker, “the father of modern management.” I’ll leave it up to you if “modern management” is a good thing or not.
